Content system for behaviour-driven risk interventions across multiple regulated markets.

Designing Unibet's 'Safer Gambling' intervention content system

Context

I led the content strategy for real-time gambling interventions across the Unibet, 32Red, and Bingo.com brands. We used ‘Markers of Harm’ (data signals like spending spikes or playing late at night) to trigger safety messages.

My job was to turn these technical alerts into helpful, human conversations. I also built a scalable, compliant communication system that balanced strict multi-market regulations with a clear, supportive user experience.

Problem

The existing interventions were great at detecting risky behaviour in real time, but our messages and user experience were:

- generic and repetitive, no matter how much a player’s risk had increased

- difficult for users to understand in context

- not clearly aligned to the severity of the user’s behaviour

- not a clear reflection of the complexity of the system

We had a system that tracked dozens of data points (like how long someone played or how fast they spent money) and triggered alerts in real time.

The challenge was to turn this into a coherent, scalable user experience. We needed to move from ‘system-led’ alerts to ‘user-led’ conversations that feel supportive, not accusatory. Everything had to work without manual intervention.

My Role

As the Senior UX Content Designer, I was responsible for:

- Setting the strategy: I defined how we talk to players at every stage, from a friendly nudge to a serious account restriction.

- Designing the experience: I wrote and structured the content for real-time alerts, emails, and onsite messages.

- Translating the ‘Why’: I turned complex data and legal rules into clear, human language that players could actually understand.

- Building a system: I ensured everything worked without manual intervention. This wasn’t just a single flow; it was a system where:

  - content adapted automatically across different risk levels

  - the tone stayed consistent across every channel

  - messages made sense even when seen in isolation

- Managing stakeholders: I worked closely with Legal and Product teams to balance strict regulations with a smooth user experience.

Approach

The overall approach was to:

- turn behavioural signals into plain language

- match the real-time messages to the risk level.

The automated system detected dozens of patterns, such as increased spending, longer gaming sessions, or late-night play. But users may not necessarily see these ‘markers of harm’ or patterns themselves.

My goal was to:

- explain what’s happening without exposing internal logic

- be transparent without overwhelming

- help users understand the why, not just the restriction.

Solution

I designed a system that scales across different risk levels and channels. This ensured we weren’t just sending random alerts, but managing a clear, automated safety process.

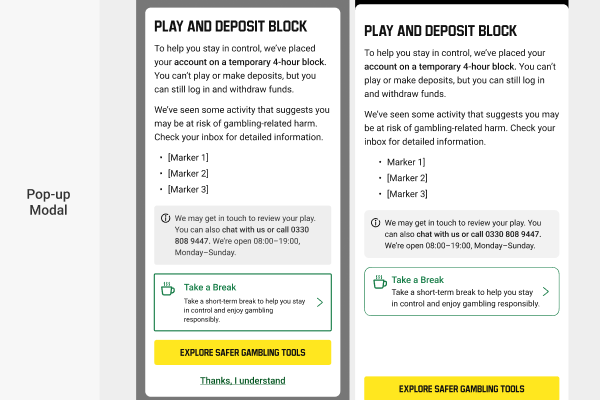

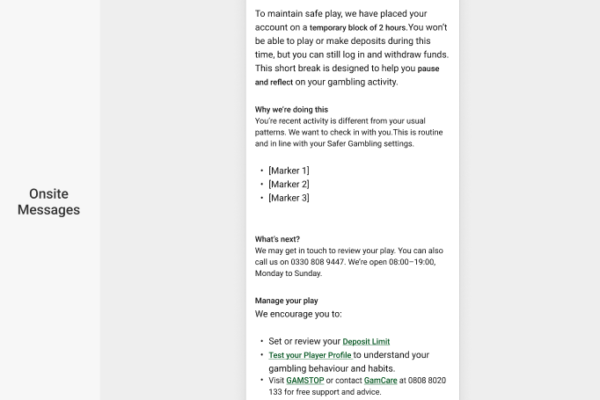

A 4-level risk system with dynamic messaging

I mapped our messaging to the severity of the player’s behaviour. The ‘volume’ of the intervention increased as the risk moved up:

-

Low: Friendly reminders and quiet nudges to stay aware.

-

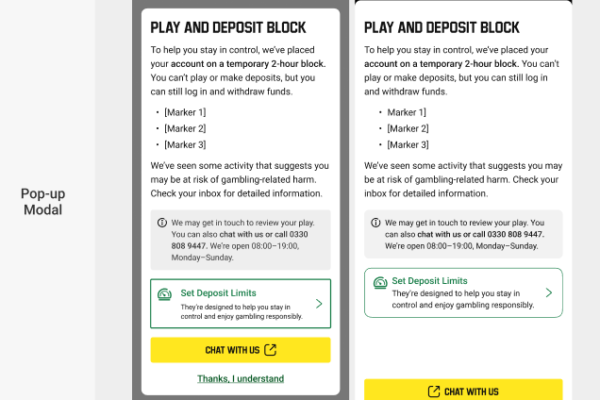

Medium: Short, 2-hour breaks to ‘Pause and Reflect.’

-

Medium-High: Enforced 4-hour pauses to break the momentum of play.

-

High: Full account restrictions pending a safety review.
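As a rough illustration of how the four levels above could be held as data rather than as one-off copy, here is a minimal Python sketch. The names `RiskLevel`, `Intervention`, `INTERVENTIONS`, and `intervention_for` are hypothetical and are not taken from the production system; only the level names and the 2-hour and 4-hour durations come from this case study.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class RiskLevel(IntEnum):
    """Ordered so that a higher value means a 'louder' intervention."""
    LOW = 1
    MEDIUM = 2
    MEDIUM_HIGH = 3
    HIGH = 4


@dataclass(frozen=True)
class Intervention:
    action: str                 # hypothetical identifier, not a real system name
    pause_hours: Optional[int]  # None where the case study gives no fixed duration


# One entry per level, mirroring the list above.
INTERVENTIONS = {
    RiskLevel.LOW: Intervention("reminder", None),
    RiskLevel.MEDIUM: Intervention("pause_and_reflect", 2),
    RiskLevel.MEDIUM_HIGH: Intervention("enforced_pause", 4),
    RiskLevel.HIGH: Intervention("account_restriction", None),
}


def intervention_for(level: RiskLevel) -> Intervention:
    """Look up the intervention that matches a player's current risk level."""
    return INTERVENTIONS[level]
```

Keeping the mapping in a single table like this is one way a message can change automatically as the risk level changes, without anyone editing copy by hand.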

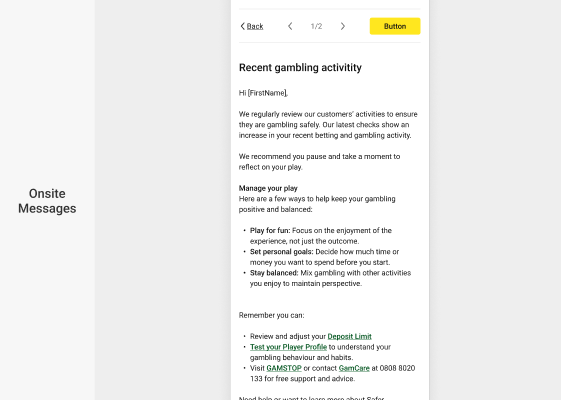

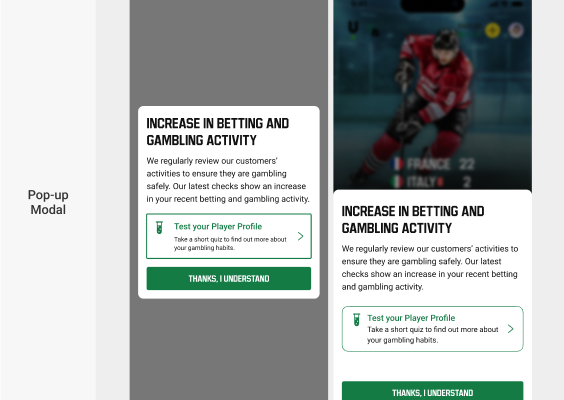

Right message in the right place

In an automated system, the message must make sense wherever the player sees it. I gave each channel a specific job to do:

-

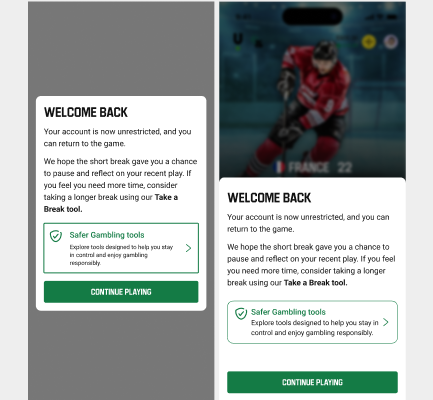

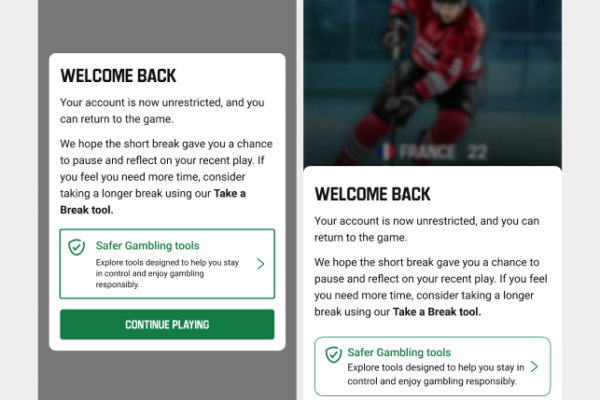

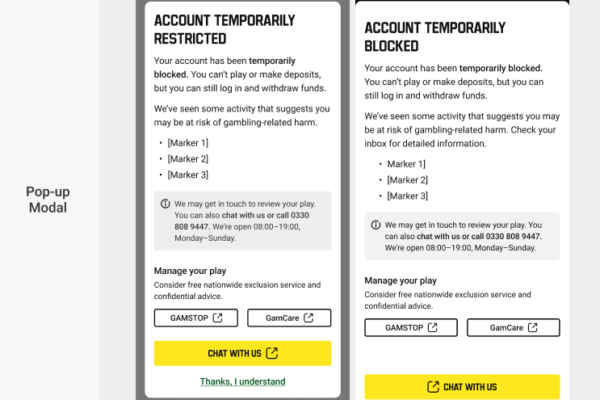

Pop-up messages (modals): These are for immediate action. They ‘stop’ the player mid-task when they need to take a break or read a serious update.

-

On-screen alerts: these are small, helpful reminders that stay visible while the player is using the app. They guide the player toward safety tools without being too pushy.

-

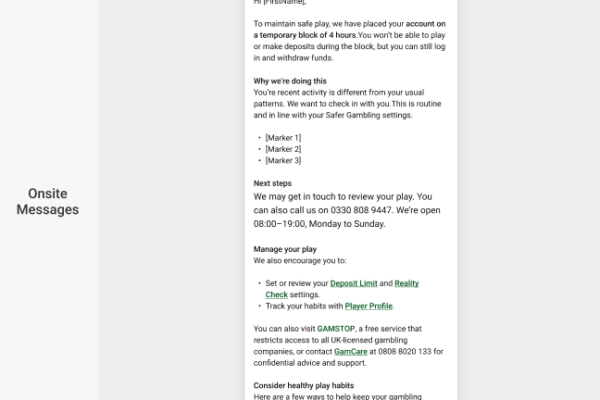

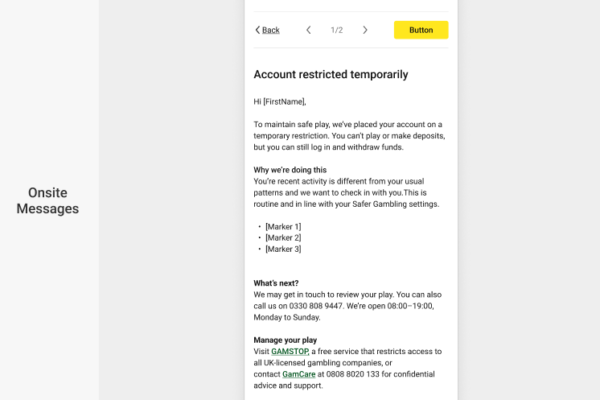

Emails: These provide the ‘official record.’ They give the full story, explain the reasons for the break in detail, and offer links to long-term support.
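The channel roles above can be pictured as one set of content parts that each channel draws from. The Python sketch below is illustrative only: `Channel`, `MessageParts`, `CHANNEL_PARTS`, and `render` are hypothetical names, and apart from the quoted reason, the strings are placeholder copy rather than the wording that shipped. The plain-language reason is included in every channel so that each message still makes sense when seen in isolation.

```python
from dataclasses import dataclass
from enum import Enum


class Channel(Enum):
    MODAL = "modal"
    ON_SCREEN_ALERT = "on_screen_alert"
    EMAIL = "email"


@dataclass(frozen=True)
class MessageParts:
    reason: str       # the plain-language 'why'
    action: str       # what happens now, e.g. the break
    tool_prompt: str  # pointer toward a safety tool
    detail: str       # fuller explanation for the record
    support: str      # links to long-term support


# Which parts each channel shows, in order (an assumed split, for illustration).
CHANNEL_PARTS = {
    Channel.MODAL: ("reason", "action"),
    Channel.ON_SCREEN_ALERT: ("reason", "tool_prompt"),
    Channel.EMAIL: ("reason", "action", "detail", "tool_prompt", "support"),
}


def render(channel: Channel, parts: MessageParts) -> str:
    """Join the parts that belong to the given channel into one message."""
    return " ".join(getattr(parts, name) for name in CHANNEL_PARTS[channel])


# Placeholder copy, for illustration only.
example = MessageParts(
    reason="You've been playing for 6 hours straight.",
    action="Your account is paused for 4 hours.",
    tool_prompt="You can set a deposit limit at any time.",
    detail="We pause play when we see long sessions without a break.",
    support="Free, confidential support is available from GamCare.",
)
```

With this shape, `render(Channel.MODAL, example)` returns only the reason and the action, while `render(Channel.EMAIL, example)` returns the full story.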

Helping players help themselves

Every part of the journey was designed to lead the player toward helpful safety tools. Instead of just stopping play, we made it easy to take action:

- In-app tools: Setting deposit limits, ‘Reality Checks,’ and ‘Take-a-Break’ features.

- Outside help: Direct links to professional support like GAMSTOP and GamCare.

User testing & Insights

I ran tests with real players to see how they reacted to being interrupted. This wasn’t a one-time thing; I used their feedback to rewrite and improve the messages until they were clear and helpful.

What I learned and improved:

- Honesty reduces anger: I found players were 40% less likely to call support if the message explained exactly why they were blocked (for example: “You’ve been playing for 6 hours straight”). I iterated on the copy to make these reasons front and centre.

- Vague is scary: In early tests, generic ‘Safety Check’ messages made players worry they were being accused of fraud. I changed the language to be more transparent, which helped lower their anxiety.

- Direct links work: Players usually ignored advice to ‘visit the help centre.’ When I changed the design to put a ‘Set a Deposit Limit’ button directly in the message, 65% of players clicked it.

- Refining the tone: Testing helped me find the right balance, making sure the message was serious enough to stop risky play, but supportive enough that the player didn’t feel attacked.

Managing Stakeholders

In a highly regulated industry, I often had to navigate the tension between legal requirements, business costs, and the user experience.

The Challenge

Because the company faces massive fines for compliance failures, some stakeholders were keen to interpret regulations as strictly as possible. While they meant well, this often led to a confusing or poor experience for the player. On the other hand, some teams wanted to do the bare minimum, meeting the legal requirement technically, but in a way that didn’t actually help the user.

The ‘Help Centre’ Conflict

A clear example of this was when regulations required us to tell players why their account was restricted and give them a way to contact us for more info.

- The Business Ask: The team wanted to send players to a generic Help Centre home page. Their goal was to protect the call centres from a sudden surge in traffic.

- The User Problem: Forcing a restricted, often distressed player to hunt for a phone number or chat link is bad for trust and safety. The info was ‘there’, but it was hidden.

- The Advocacy: I pushed back, showing that helping the user quickly actually benefits the company in the long run by reducing frustration and long-term friction.

The Compromise

I couldn’t get a direct phone number on the pop-up modal due to the call centre concerns, so I negotiated a ‘deep-link’ strategy. We still sent users to the Help Centre, but instead of the home page, we landed them directly on the specific section where the live chat and phone numbers were front and centre. This met the legal requirement and protected the call centre while making sure the user wasn’t left in the dark.
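To make the difference between the two destinations concrete, here is a small Python sketch of a deep link built from a Help Centre home page. The domain, section path, and anchor are placeholders invented for illustration, and `contact_deep_link` is a hypothetical helper, not part of any real Help Centre API.

```python
from urllib.parse import urlsplit, urlunsplit

# Placeholder URL, not the real Help Centre address.
HELP_CENTRE_HOME = "https://help.example.com/"


def contact_deep_link(home: str, section_path: str, anchor: str) -> str:
    """Point at a specific section of the Help Centre instead of its home page."""
    parts = urlsplit(home)
    # Keep the scheme and host, swap in the section path and the on-page anchor.
    return urlunsplit((parts.scheme, parts.netloc, section_path, "", anchor))


link = contact_deep_link(
    HELP_CENTRE_HOME,
    "/safer-gambling/account-restrictions",  # hypothetical section path
    "contact-us",                            # hypothetical anchor near chat and phone details
)
```

The player still arrives on the Help Centre, which was the business ask, but on the part of the page where the contact options are.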

What I’d do differently

- More Testing: I wish I had more time to test different versions of the ‘Welcome Back’ screen to see which button copy worked best.

- Better Data: I’d like to track which specific phrases made players click ‘I understand’ the fastest.

- Better ‘Off-boarding’: I would spend more time on the emails sent to users who are permanently banned to make sure they feel supported, not just ignored.

Measuring success

- Lower Operational Costs: Providing clear, ‘why-based’ messaging led to a significant drop in support tickets. Players no longer had to call just to find out why they were paused.

- Consistent Compliance: The modular system allows for instant updates. This ensures we stay strictly aligned with shifting UK and EU laws, protecting the company from fines.

- Positive Behavioural Change: Players were more likely to use safety tools (like setting their own deposit limits) when the suggestion was built into the ‘Welcome Back’ flow.

- System Scalability: This framework is now the standard for how we handle automated interventions across the UK, France, and Sweden.